Your Solution Doesn't Know Your Problem Exists

If your problem is structural, your approach must be radical.

Evan Miyazono is the founder of Atlas Computing. This piece is his call to action for better field strategy and more scalable execution in the face of growing AI opportunity and risk.

(edit: I stealthily posted this without the email blast to subscribers because (1) I wanted to submit it to Manifund’s essay contest with a very relevant prompt last Friday night, and (2) I wanted to do a heavy rewrite for succinctness before sending it to inboxes. But this essay won in its category, so I feel compelled to give it some distribution. 😅)

I was recently at an invite-only workshop with 60 people. All brilliant, all ambitious.

We were there to help solve one of the biggest problems we believe is facing our species: in the next few years, amidst all the boons of more capable AI models, we’re heading for a world where millions of people will be able to direct cyberweapons beyond the reach of today’s spies; millions will be able to design bioweapons that could kill billions.

(I also frequent events focused on integrating AI into nuclear command and control, or risks to our ability to trust our collaboration and decision-making ability. Loads of fun to talk about at parties.)

Approaches like the (recently announced) $100M effort (joining other existing efforts) to give defensive cyber capabilities to open-source software may be too little, too late, and ultimately too focused on too narrow a subset of civilization’s infrastructure.

There are strong proposals to create mechanisms to prevent dangerous uses of AI, securing various types of vulnerabilities against multiple threat vectors, creating multiple defensive layers; however, adopting the proposals in their current forms would require massive changes across entire hardware supply chains and could cost tens of billions of dollars.

I got up in front of the room to deliver some bad news.

There are six people in the world who could, in 15 minutes, set things in motion that would address all of your concerns.

None of them know you exist, some of them don’t even believe this problem exists, none of them give a damn about anything we do here today.

And it seems clear that no one here is trying to talk to any of them.

You’ve likely already heard that there are a billion dollars in your laptop and you just have to type the right words to get them out. It’s a provocative, simplistic statement that is also clearly true.

I think my bad news is similarly true: either you don’t credit the current technological revolution as being truly revolutionary, or you’re an AI doomer who thinks there’s nothing we can do to avoid catastrophic outcomes, or you understand that there are a certain number of calls, dinners, e-mails, workshops, white papers, and contracts that do get us from apocalyptic outcomes to great ones, because people are powerful and so are the technologies they wield.

There’s a lot (but maybe not enough) of humanpower and money chasing solutions, and I believe many people are approaching the problem of effecting change incorrectly; here’s what I recommend and why it’s worked for me.

The Excluded Middle

Humans are consummate tool-makers.

Organizations are themselves a kind of tool that, in our inventiveness, we’ve learned to create to solve problems. But even the best tools can limit our minds: once you have a hammer, you’re gonna have a hard time using screws instead of nails.

I worry that we, as decision-makers within organizations, are falling under the law of the instrument as we try to solve big problems.

Here’s what I mean:

VCs assume problems can be solved by startups.

Policymakers think things should be new laws, new taxes, new agencies.

Researchers look for research projects, because even sociotechnical problems need a proof-of-concept to derisk or illustrate capabilities.

And even the philanthropically-funded and non-profit organizations like mine have to color inside the lines of the tax code and our employees’ career trajectories.

That means we miss things. (Convergent’s President, Anastasia Gamick, recently wrote a related piece about how this limits our imagination when it comes to for-profit companies.) (Here “we” refers to an ambiguously-defined, self-identified set of organizations trying to improve the world faster and cheaper than what can be achieved deterministically by conventional means.)

There are a lot of entities in this space: Convergent Research and the various FROs, Speculative Technologies, FutureHouse, The Astera Institute, Renaissance Philanthropy, the Institute for Progress, BlueDot Impact, as well as my nonprofit, Atlas Computing, and so on.

Speaking for myself: I worry that all of us are, to some degree, incentivized to put up bigger walls around our particular fiefdoms, maximizing the capital and prestige of the problems we are dealing with. This makes the ecosystem more legible, but in exchange makes it less flexible and less able to adapt to new types of problems. (For example, research funders issue specific calls around topics or subfields; every great researcher I’ve ever met could instantly name at least one project they would love to work on, but there’s never a relevant call for proposals.) This leads to a failure mode where problems that require a solution outside or spanning different fiefdoms remain unsolved; worse still, by survivorship bias, the most important remaining problems will be the ones that don’t fit neatly into buckets.

Some examples:

We’re imbuing AI systems with expert knowledge in incredibly dual-use domains; but our controls, feedback loops, and safeguards against CBRN risks all hinge on the assumption that expert knowledge lives in expert humans. However, it’s difficult and illegal to kidnap, clone, or compel these experts to help you, and they’d try to call the FBI if you started down that path. On the other hand, free tools exist to do analogous things to these AI systems and they can’t or won’t invoke reactions from law enforcement. Who’s responsible for making sure AI systems won’t create catastrophic harm without crossing critical legal and privacy boundaries?

Who sets those boundaries, deciding what an AI system should (or shouldn’t) do? These systems are far more complex and less predictable than any other technology, so existing measures that reduce chaos caused by technology like procurement processes and standards are insufficient; conversely, our laws and institutions cannot be easily adapted to treat them like people. As a result, we default to nominating and arguing for which experts, organizations, or institutions should decide what AI should do or refuse, and this lapses into forcing new problems into fiefdoms built for old solutions. This looks very different from designing the best organization, ex nihilo, and then creating it.

How do we know that the AI agent you created is actually acting on your behalf, rather than someone else’s agent claiming to be yours? (And how do we fix the insecurity of social security numbers? One solution might answer both questions!)

These problems are challenging because they fall outside traditional domains and are, as such, difficult to pose well within existing frameworks. They’re not quite Wicked Problems; it’s reasonable to imagine each has a solution, but everyone with nuanced understanding of the problem has some restriction (like a conflict of interest) that prevents them from proposing or pursuing a viable, timely solution. Therefore, these problems often become more challenging for anyone to solve because they sit outside those domains, leaving no institution that could employ a would-be solver to do the work.

I founded Atlas Computing to map paths for society around the risks and toward the better outcomes that lie within a world with powerful AI. (I’ll call this role a Field Strategist; more on what they do below.) Before my work at Atlas, I built and ran Network Goods, a venture studio focused on building companies that provided novel mechanisms to evaluate and fund public goods and commons. Between those roles, over 5 years, I have identified technological gaps, recruited eight individuals to start a new company or nonprofit and take ownership of the proposed solution, handed off any progress that had been made, and introduced them to their first funder.

Focused on the most important and ignored problems, I have been consistently drawn into the role of fieldbuilding at the intersection of unusual fields. In those conversations, it was consistently clear that some problems were not only going unaddressed, but could not be addressed by anyone in any role that was close enough to the problem to be aware of its existence. I had almost become numb to the refrain “it’d be great if someone would do X, but I don’t know who would” before realizing that Atlas Computing could be a living, compelling counterexample: an organization that actually became whatever it needed to be in order to fill that (meta)niche.

I joined Convergent to help design and launch FROs that would help prepare the world for powerful AI. I chose Convergent because this organization is the home of the most successful metascientific experiment of our age. Speaking just for myself: conceiving of a FRO might be (relatively) easy, but delivering enough successes for the NSF to launch the Tech Labs initiative might be the biggest structural change to science since the creation of (D)ARPA. I’m very excited to be here and to continue Atlas’s field strategy and organization-building work under the Convergent umbrella, and I expect some very interesting FROs (among other things) will come out of this work.

Convergent purposefully created the FRO, the Focused Research Organization, to contain, own, and solve some of the problems in the interstitial space. (You can read more about that here and here and here.) It was a new type of organization meant to solve an otherwise-excluded type of problem. Other institutional entrepreneurs have engaged in similar kinds of innovation in creating Advance Market Commitments, prediction markets, and forecasting groups.

I’m quite happy all this organizational innovation has happened. At the same time, it’s not enough, and we cannot just hope that more organizations in the current models will be able to solve the problems by being incrementally better.

Accordingly, it’s important that we consider creating new organizational forms to adopt orphaned problems. The intuition here is that one way to actually achieve systemic change is to find the leverage points to change the system’s behavior.

Michael Nielsen and Kanjun Qiu provide another intuitive path towards this conclusion in their piece, A Vision of Metascience:

Szabo writes of how, during the early Renaissance, exploring the oceans was an extremely risky business. Ships could run aground, or be blown badly off course by storms. Sometimes entire crews and ships and cargoes were lost. There were risks at all levels of an expedition, from the health and livelihood of individual sailors through to financiers who faced ruin if the ship ran aground or was badly damaged. But, Szabo points out, this risk profile changed considerably in the 14th century, when Genovese merchants invented maritime insurance: for the cost of a modest premium, the people financing the expedition would not suffer if the ship was damaged. This spread the risk, and made the expedition much less risky for some (though not all) participants. That change in the funding system helped enable a new age of exploration, discovery, and prosperity.

It is easy to imagine a salty Genovese sea captain, upon being asked how to improve shipping, saying that you “just need good ships, crewed by good sailors”. This would be in the vein of our scientist friends telling us “just fund good people doing good work”. It contains a large grain of truth, but is not incompatible with system-level ideas radically improving the situation. The salty scientists are correct, but only within a limited outlook. Research organizations do need to be maniacal about funding good people with good projects; they can also make system-level changes which have much more profound effects.

I hope at this point I’ve convinced you that we need new mechanisms and a new type of organization to find high-leverage interventions for interstitial problems. I also have a proposal for how that organization should work.

Give me a Long Enough Lever and a Field Strategist…

The Care and Keeping of Good Problems

So, how do we find and fund the right people to adopt and care for an orphaned problem? (A line I heard at a recent conference: if you find a bottleneck – or an abandoned baby – you might not be to blame, but you should definitely consider yourself responsible.)

My proposed solution is to find people in society who are near-enough to the problem (either because they are close to it, downstream of it, or care for things that will be affected by it) and simultaneously identify and use creative mechanisms to align incentives between these people and create a properly-shaped solution to solve it.

That might sound tautological or boring, so let’s break down a few nuances that I think are tripping people up now.

Roadmap what you think needs doing.

My loose mental model is that you could imagine a tree of macroscopically distinct futures, with probabilities distributed across all the branches — something like Tim Urban’s Tree of Life, but for civilizational trajectories. If you want to shape the future, your first job is to decide how you want to influence those probabilities.

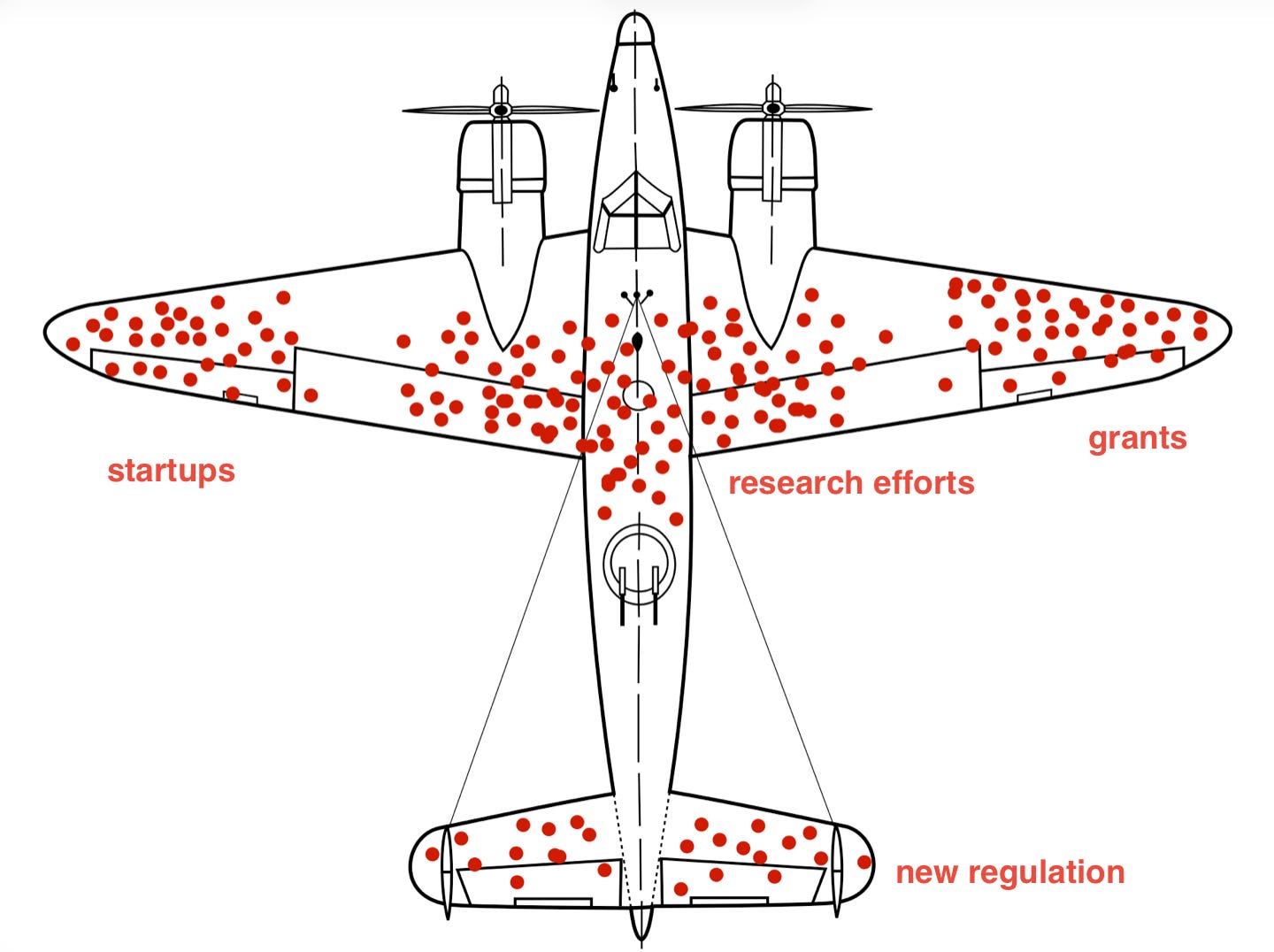

That starts with roadmapping. What challenges will arise from growing AI capabilities? This is going to mostly come from asking experts — the people close enough to the frontier to see what’s coming, but often too constrained by institutional roles to act creatively given what they see. It sounds obvious, but trust forecasters who have consistently been right about this, not the canonical experts.

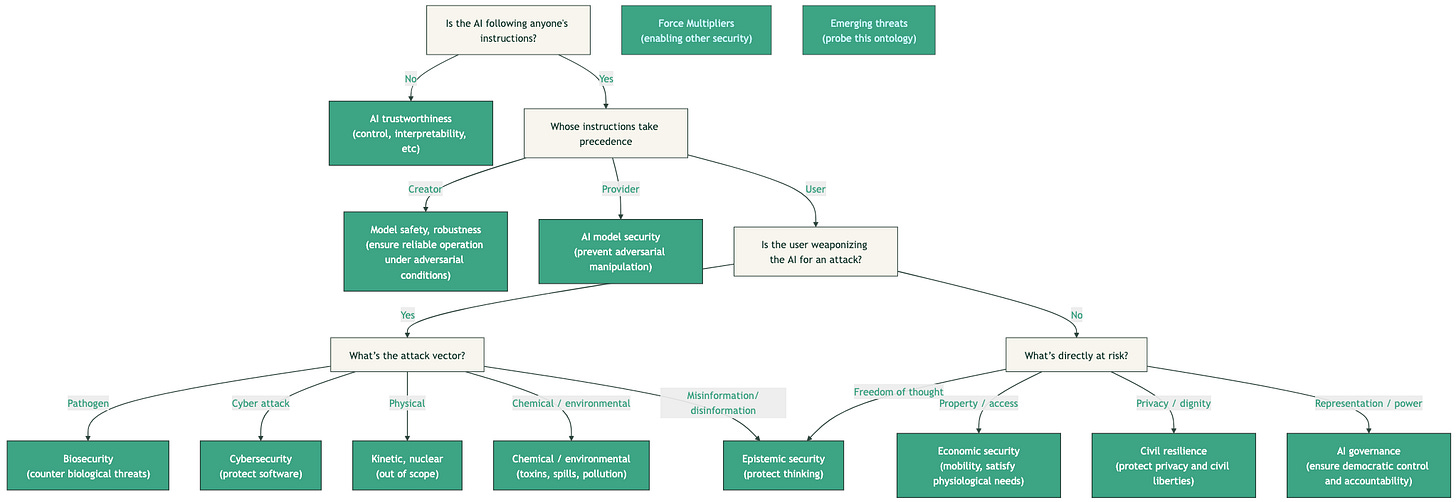

For each of those challenges, identify a reasonable subset of the world — likely a subset of the economy — that you can model with reasonable confidence. When I went through this exercise to generate this tree, I started with a normative premise: “We want AI that follows instructions and doesn’t cause widespread, catastrophic harm.” That decomposes quickly into concrete, industry-specific goals — how to make an AI follow instructions, whose instructions, what kinds of harm exist and how we stop them. For a specific example, biotechnology is a fairly well-defined industry, with clear industry players, predictable roadmaps, and a sea of investors, entrepreneurs, customers, and researchers. If you worry about a particular biosecurity risk from AI, it is challenging but straightforward to list the set of mitigations and the relevant adoption quorum for that mitigation to be successful. The “subsets of the world” are the green boxes in that tree, each inheriting something like a goal or win condition.

Decide how you want to change the future.

Once you’ve identified a relevant risk/industry[1], figure out what it would look like to actually mitigate the risks: what you want the end-state to look like once a given AI capability has emerged. If your intervention can be quick, you won’t have to predict the shifting landscape, but if your intervention will take time, be sure to include forecasts of where various trends will leave the world you’re trying to change.

Rather than rejecting or ignoring those trends, I find it’s easier to envision and describe defense dominance than the complete elimination of risk: the goal is to introduce new tools, practices, knowledge, or affordances that make (otherwise destabilizing) new AI capabilities asymmetrically beneficial to defenders of civilization. If you can paint a clear picture, it’s easier for others to check feasibility, spot holes, or identify the role they want to play. Defense dominance is like a castle: it’s very hard to attack a castle with another castle, but very easy to defend land with one. Formal verification has the same property: if you have tools that help you define security and privacy and prove that software has those properties, it’s very hard to use those same tools to attack the security or privacy of other actors. We created an example of what an early draft roadmap looks like just for cybersecurity here, though others have made even more progress proposing defensive equilibria for other areas; for epistemic security, here’s a list of possible interventions with a full defense-dominant scenario sketched out here.

From those roadmaps, you draft high-leverage proposed solutions — specific interventions that could enable the risk mitigations you’ve described. Then validate them: Talk to the experts who can say “yes, that’s a real problem” and “yes, that is technically possible.” If they say it’s not, ask them to help you problem-solve. “If you were convinced this is a problem, what do you think is the best way to solve this?” Talk to the users, customers, or stakeholders who, if they adopted your solution, would be sufficient to solve the problem. Ask if they can say “if that existed, I would use it” or “it would solve a problem I’m facing.” If not, find out why. Use those conversations to iteratively improve the solution you are proposing.

In my experience, developing these solutions is genuinely hard, and the existence of a solution that satisfies all your desired constraints is not guaranteed. But the experts almost always understand the problem well, want it solved, and frequently have ideas for solutions they simply aren’t positioned to pursue themselves. Stakeholders, in my experience, are far more likely to say “I wish I could help, but I’m limited in what I can do” than to brush off attempts to solve the problem. These are not people who need convincing that the problem matters; they need someone capable to show up with a credible plan and a willingness to help.

Make it easy for someone to step in.

Once you have a validated solution, think about what that intervention needs in order to attain impact. Asking a company to improve civilizational robustness by creating a slightly different product they can sell to a whole new market may be done once you’ve made a well-framed introduction. Setting up a new focused research organization might need a bit more effort and resources. The resources, as well as what business model it might need to be economically sustainable, inform what legal structure it should take. Then make it as easy as possible for someone to execute on the plan: develop milestones, budgets, team structure, job descriptions, a list of interested funders, and the proposed solution with sign-off from your experts and stakeholders, who become the new team’s advisors and first users.

Lastly, find the people who can execute.

This is the part that is controversial in the SF Bay Area, land of “fund people not projects” – I claim that strategy and execution are two largely non-overlapping skillsets, and can be done by different people. Some people have both, and some problems can only be solved by brilliant generalists who can adapt to shifting uncertain landscapes. In those problems, it’s better if you’re solving problems for stakeholders who don’t know you exist, because there’s so much uncertainty that you don’t even know who the right stakeholders would be yet. However, if you think things are going to break soon and you can predict how, there’s a lot less uncertainty to deal with. You should start solving those mazes from both ends, which looks like identifying a quorum whose agreement would be sufficient to make adoption of your solution inevitable, and eliciting a conditional commitment to a solution (if only you can provide it). That quorum’s clear demand signals are the hard part of solving the problem, so you need to be damn sure they know you (and your proposed solution) exist.

That demand signal gives you clarity that unlocks specialization of labor, which in turn gives you more capacity to build better solutions, faster:

More capacity, because the required skillset for each role is narrower, which means there are more people who qualify

Faster, because you’re not waiting for the unicorn generalist. Everybody is hunting for the unicorn generalists, but there are so many items in civilization’s maintenance backlog that we’d run out of mythical creatures!

And better, because a field strategist can pivot to a completely different solution the moment a better one is proposed, something a generalist founder, who will inevitably fall prey to the law of the instrument, cannot do. They’ll only succeed if they happen to propose solutions they personally have the skills to pursue.[2]

The person turning potential risks into a derisked solution that can be handed off to an identified team for execution is what I call a Field Strategist. You may have seen this call for ‘general managers’ for more of the world’s important problems. I agree with everything in that piece, especially the premise that the most important problems have no silver bullet solutions, and rather require a lot of lead ones. That said, finding a GM is hard, providing them with sufficient resources to solve the problem is hard, and that means scaling this approach to all of society’s problems is likely impossible. I claim the field strategy approach is far higher leverage, since the strategist has ownership only as long as they must, and they parallelize as much as they can.

On a more legible level, the output should look like a proposal that is, by construction, the most compelling case you can make for a given intervention. Take the proposal for a new FRO we’re calling Oath Technologies: this organization will design and build new tools and workflows for understanding and checking AI actions by grounding those actions in mathematically precise descriptions of requirements. The direction is, by construction, the most compelling intervention I’ve come across for making formal verification ubiquitous for verifiable cybersecurity (lots of people are working on autoformalization; no one else is focused on validating specs in this way). Similarly, the founder is the best person I’ve come across to lead this effort. And because I landed on this intervention via the approach I’ve described, it’s easy to trace the causal claim back. Oath FRO makes it easy to validate formal specifications, which is the key gap that must be filled for cheap formal verification, which is needed for defense-dominant and provably correct software, which is needed for cyber-resilience, which is needed for “AI that follows instructions and doesn’t cause harm.”
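For readers who think in data structures, the causal trace above can be written down as a small sketch. This is only an illustration: the dictionary `ENABLES`, the function `trace_to_goal`, and the exact node labels are hypothetical names introduced here, paraphrasing the chain in the previous paragraph, and are not part of any actual roadmap tooling.

```python
# Hypothetical encoding of the causal chain described in the text.
# Each key is an intervention or capability; its value is what it enables.
ENABLES = {
    "Oath FRO": "easy validation of formal specifications",
    "easy validation of formal specifications": "cheap formal verification",
    "cheap formal verification": "defense-dominant, provably correct software",
    "defense-dominant, provably correct software": "cyber-resilience",
    "cyber-resilience": "AI that follows instructions and doesn't cause harm",
}


def trace_to_goal(start, enables):
    """Follow 'enables' links from an intervention up to the top-level goal."""
    path = [start]
    seen = {start}
    while path[-1] in enables:
        nxt = enables[path[-1]]
        if nxt in seen:
            # A cycle would mean the roadmap never reaches a goal.
            raise ValueError(f"cycle detected at {nxt!r}")
        path.append(nxt)
        seen.add(nxt)
    return path


if __name__ == "__main__":
    # Print the chain one step per line, from intervention to goal.
    for step in trace_to_goal("Oath FRO", ENABLES):
        print(step)
```

The point of writing it this way is that every proposed intervention should have an unbroken path to the normative premise at the root of the roadmap; if a link is missing, that gap is the next thing to validate with experts and stakeholders.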

What I’m asking for.

I need more field strategists who feel that their desire to have a positive impact is overconstrained by the requirements and affordances of existing organizations — people who love looking at Gordian coordination problems and finding a solution that aligns incentives, people who can convince a VP at a Fortune 500 company to conditionally commit to trying a solution if it can be built, or people who always know someone with shockingly relevant expertise. I’m currently raising funding to gather and support them, in what I’m convinced is the most important thing I can be doing. And I think we, as a society, need more people, in more places, to adopt this approach. The problems that don’t fit neatly into any existing fiefdom are, by survivorship bias, the most important ones left. They need someone whose job it is to find them, scope them, and make it easy for the right person to step in and solve them.

If you think you might be a Field Strategist, our job listing is here. If you’ve got a problem you think falls between the cracks, or you’re a funder who wants to back this kind of work, you can email me at evan@atlascomputing.org. We’re a nonprofit, so we’ll keep sharing our playbook on our blog as we go.

[1] Biosecurity risks could be addressed by the biotech industry.

Cybersecurity is its own industry at this stage.

Epistemic security probably requires interventions in the social media industry.

[2] Relatedly, most FROs have a small number of visionary generalists and mostly consist of subject matter experts who have spent the last 10 to 20 years doing roughly X; the FRO asks them to do a rather unusual X’.

[leaving this here: this post won first place on the Manifund Essay Prize 🫡 ]

https://manifund.substack.com/p/winners-of-the-manifund-essay-prize?utm_source=post-email-title&publication_id=1491799&post_id=196046200&utm_campaign=email-post-title&isFreemail=true&r=7dv5v&triedRedirect=true&utm_medium=email
